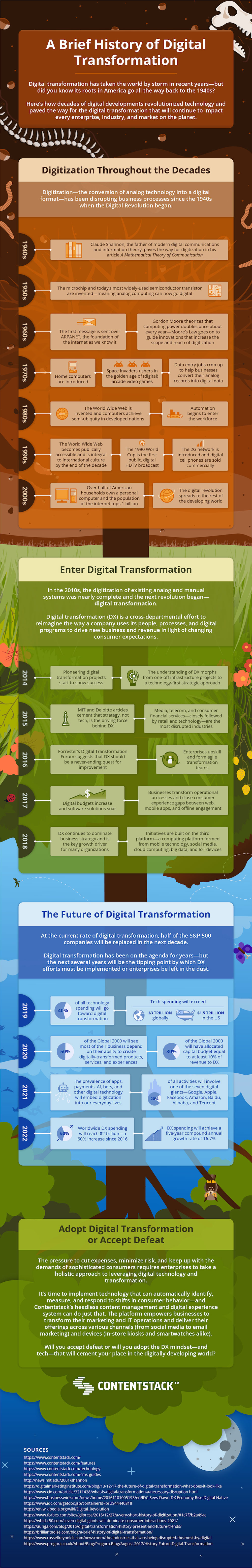

In 1948, the American mathematician and engineer Dr. Claude Shannon published A Mathematical Theory of Communication—an article that would later earn him the nickname “The father of modern digital communications and information theory.”

Why? Because, without it, we wouldn’t have the information theory upon which the entire internet has been built (Thanks, Doc!).

But it wasn’t Shannon’s idea that singularly propelled the internet into existence. In fact, it was the invention of two very important pieces of technology just a decade later that, when viewed in the context of Shannon’s publication, would change the world as we knew it.

“The late 1950s provided the fuel to really launch the computer revolution, with the invention of the semiconductor transistor and the microchip. And after that came a rapid evolution of digital technology...” -Brent Heslop, @Contentstack

In the late 1950s, the invention of the microchip and of the semiconductor transistor that is still most commonly used today enabled analog computing to go digital.

The rest is modern history.

First came ARPANET (glad we didn’t stick with that name), and Moore’s Law—which still guides technical innovations to this very day—in the 1960s.

When the 70s grooved onto the scene, home computers, Space Invaders, and data entry were all the rage, while the 80s ushered in the all-important World Wide Web and the eternal boogeyman: automation.

By the time the early 2000s came to a close, cell phones, personal computers, and internet access had gone worldwide.

And just when it looked like the digitization of manual and analog devices had reached complete saturation, everything transformed—again.

To find out how digital transformation grew from its 1940s roots to rock businesses throughout the 2010s, and what you should be doing to prepare as it reaches a tipping point (spending in the trillions, anyone?) in the next few years, here is…

The Brief History of Digital Transformation Infographic

Recommended

- A Brief History of Digital Transformation - December 19, 2019
